Facial expressions in NoldusHub

Which facial expressions are available in NoldusHub?

The facial expressions that are available depend on your version of NoldusHub.

Emotional facial expressions

NoldusHub analyzes seven emotional facial expressions from videos of your test participants. In addition, three affective facial expressions are analyzed (see below). This functionality uses the analysis options from FaceReader version 9 (Vicarious Perception Technologies bv, 2022).

NoldusHub classifies the following facial expressions of your test participant:

- Happy

- Sad

- Angry

- Surprised

- Scared

- Disgusted

- Neutral

These emotional categories have been described by Ekman [1] as the basic or universal emotions. The expressions are visualized in a timeline and can be exported to log files. Each expression has a value between 0 and 1, indicating its intensity. ‘0’ means that the expression is absent, ‘1’ means that it is fully present. The application has been trained using intensity values annotated by human experts.
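As an illustration of how the 0 to 1 intensity values can be read, the following minimal sketch picks the strongest expression in a single frame. The dictionary layout, the function name `dominant_expression`, and the sample values are assumptions made for this example; they do not describe the NoldusHub log file format.

```python
# Expression names as listed in this article.
EXPRESSIONS = ("Happy", "Sad", "Angry", "Surprised", "Scared", "Disgusted", "Neutral")


def dominant_expression(scores):
    """Return (name, intensity) of the strongest expression in one frame.

    `scores` is a hypothetical mapping from expression name to intensity.
    """
    for name in EXPRESSIONS:
        value = scores[name]
        # Intensities are documented as lying between 0 (absent) and 1 (fully present).
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} intensity {value} is outside the 0-1 range")
    best = max(EXPRESSIONS, key=lambda name: scores[name])
    return best, scores[best]


# Made-up values for a single frame, for illustration only.
frame = {
    "Happy": 0.82, "Sad": 0.01, "Angry": 0.00, "Surprised": 0.10,
    "Scared": 0.02, "Disgusted": 0.00, "Neutral": 0.15,
}
print(dominant_expression(frame))
```

Each expression is scored separately between 0 and 1, so the sketch does not assume that the values in one frame add up to 1.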

Affective facial expressions

In addition to the basic emotional categories, the following affects are estimated based on facial expressions:

- Interested

- Bored

- Confused

Affects are estimated using facial expressions over a time interval. For each frame, the estimate of the affect is calculated using the current frame and all the previous frames in the time interval. To calculate interested, bored, and confused, the following time intervals are used:

- Interested: 2 seconds.

- Bored: 5 seconds.

- Confused: 2 seconds.

At the start of the analysis, there is no time interval yet to analyze these affects. Therefore the visualization will display no data. The analysis starts when half the time interval has been reached. This means that the analysis of interested and confused starts 1 second after the start of the recording. The analysis of bored starts 2.5 seconds after the start of the recording.
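The timing rules above can be sketched as follows. The function names, the inclusive window boundaries, and the use of timestamps in seconds are assumptions made for this example. The sketch only shows which frames would fall in the interval; it does not reproduce how NoldusHub computes the affect estimate itself.

```python
# Time intervals per affect, in seconds, as listed in this article.
AFFECT_INTERVAL_SECONDS = {"Interested": 2.0, "Bored": 5.0, "Confused": 2.0}


def analysis_start(affect):
    """Seconds after the start of the recording at which the analysis starts."""
    # The analysis starts when half the time interval has been reached.
    return AFFECT_INTERVAL_SECONDS[affect] / 2.0


def frames_in_window(frame_times, current_time, affect):
    """Return timestamps of the frames used for the estimate at `current_time`.

    Returns an empty list while there is not yet enough data to analyze.
    Inclusive window boundaries are an assumption of this sketch.
    """
    if current_time < analysis_start(affect):
        return []
    window_start = current_time - AFFECT_INTERVAL_SECONDS[affect]
    return [t for t in frame_times if window_start <= t <= current_time]


# Hypothetical recording sampled every 0.5 seconds.
frame_times = [i * 0.5 for i in range(13)]
print(analysis_start("Bored"))
print(frames_in_window(frame_times, 3.0, "Interested"))
```

Between the start of the analysis and the moment a full interval is available, the window simply contains fewer frames than it does later in the recording.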

The analysis of interested, bored, and confused is based on references [2], [3], and [4].

How does facial expression analysis work?

Facial expressions are classified according to the steps below.

- Face finding. The position of the face in an image is found using a deep-learning-based face-finding algorithm [5], which searches the image at different scales for areas that have the appearance of a face.

- Face modeling. FaceReader uses a facial modeling technique based on deep neural networks [6]. It synthesizes an artificial face model, which describes the location of 468 key points in the face. This is a fast, single-pass method that directly estimates the full collection of landmarks in the face. After the initial estimation, the key points are compressed using Principal Component Analysis. This leads to a highly compressed vector representation describing the state of the face.

- Face classification. The facial expressions are then classified by a deep artificial neural network that has been trained to recognize patterns in the face [7]. FaceReader classifies the facial expressions directly from image pixels. Over 20,000 manually annotated images were used to train the artificial neural network.
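The compression step in face modeling can be written compactly as a standard Principal Component Analysis projection. This is the textbook formulation, not a description of FaceReader's internals. The number of coordinates per key point d, the number of retained components k, the mean vector μ, and the component matrix W_k are assumed symbols that this article does not specify.

```latex
x \in \mathbb{R}^{468d}, \qquad
z = W_k^{\top} (x - \mu) \in \mathbb{R}^{k}, \qquad
k \ll 468d
```

Here x stacks the coordinates of the 468 key points, μ is the mean of x over a set of reference faces, the columns of W_k are the first k principal components, and z is the compressed vector describing the state of the face.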

Validation

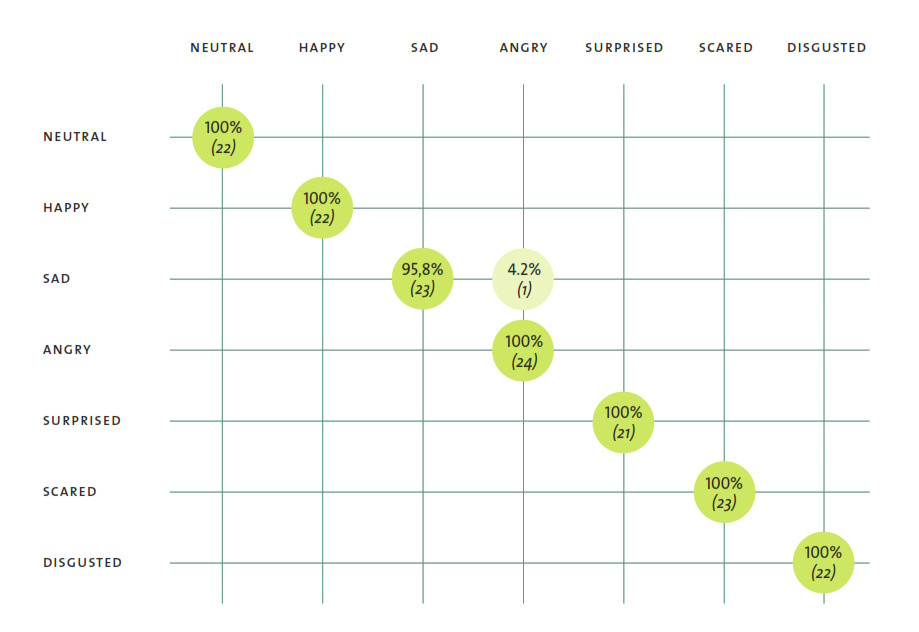

The results of the facial expression analysis have been compared with intended expressions. The figure shows the results of a comparison between the analysis in FaceReader version 9, which delivers the expressions to NoldusHub through an API, and the intended expressions in images of the Amsterdam Dynamic Facial Expression Set (ADFES) [7]. The ADFES is a highly standardized set of pictures containing images of eight emotional expressions. The test persons in the images were trained to pose a particular expression, and the researchers labeled the images accordingly. The images were then analyzed in FaceReader. As you can see, FaceReader classifies all ‘happy’ images as ‘happy’, giving an accuracy of 100% for this expression.
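The accuracy figure mentioned above is the fraction of images with a given intended expression that received the same classification. A minimal sketch of that calculation follows. The function name and the sample pairs are made up for illustration and are not validation results.

```python
from collections import Counter


def per_expression_accuracy(pairs):
    """Return {intended label: fraction classified as that same label}.

    `pairs` is an iterable of (intended, classified) labels, one per image.
    """
    totals = Counter()
    correct = Counter()
    for intended, classified in pairs:
        totals[intended] += 1
        if intended == classified:
            correct[intended] += 1
    return {label: correct[label] / totals[label] for label in totals}


# Illustrative labels only, not taken from the ADFES comparison.
pairs = [
    ("happy", "happy"),
    ("happy", "happy"),
    ("sad", "sad"),
    ("sad", "neutral"),
]
print(per_expression_accuracy(pairs))
```

In this made-up sample, every image intended as ‘happy’ is classified as ‘happy’, which corresponds to an accuracy of 100% for that expression.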

References

1. Ekman, P. (1970). Universal facial expressions of emotion. California Mental Health Research Digest, 8, 151-158.

2. McDaniel, B., D'Mello, S., King, B., Chipman, P., Tapp, K., & Graesser, A. (2007, January). Facial features for affective state detection in learning environments. In Proceedings of the Cognitive Science Society (Vol. 29, No. 29).

3. Kapoor, A., Mota, S., & Picard, R. W. (2001, November). Towards a learning companion that recognizes affect. In AAAI Fall symposium (pp. 2-4).

4. Grafsgaard, J., Wiggins, J. B., Boyer, K. E., Wiebe, E. N., & Lester, J. (2013, July). Automatically recognizing facial expression: Predicting engagement and frustration. In Educational Data Mining 2013.

5. Bulat, A., & Tzimiropoulos, G. (2017). How far are we from solving the 2d & 3d face alignment problem? (and a dataset of 230,000 3d facial landmarks). In Proceedings of the IEEE International Conference on Computer Vision 2017 (pp. 1021-1030).

6. Gudi, A., Tasli, H. E., Den Uyl, T. M., & Maroulis, A. (2015, May 4). Deep learning based facs action unit occurrence and intensity estimation. In 2015 11th IEEE international conference and workshops on automatic face and gesture recognition (FG) (Vol. 6, pp. 1-5).

7. Schalk, J. van der, Hawk, S. T., Fischer, A. H., & Doosje, B. J. (2011). Moving faces, looking places: The Amsterdam Dynamic Facial Expressions Set (ADFES). Emotion, 11, 907-920. DOI: 10.1037/a0023853.

More information

More information on facial expression analysis and validation is available in the FaceReader Methodology Note, which can be obtained from your Noldus sales representative.
